At most of the machine-learning conferences and workshops I attended over the past 5 years, 90% of the speakers shared incredible feats of accomplishments using supervised learning, while maybe only 10% of the speakers mention unsupervised learning. Google Scholar Search shows close to 1.3 million publications that mention supervised learning and a half a million publications that mention unsupervised learning. Along these lines, when searching for peer-reviewed publications on OnePetro, 120 results mention supervised learning, while only 50 mention unsupervised learning. Supervised learning has garnered widespread commercial implementations, while unsupervised learning has stayed on the sidelines.

Supervised learning is narrowly focused on computing a data-driven model (a function) that relates the features (descriptors or attributes) to the targets (labels). Being narrowly focused on mapping features to targets, supervised learning has many commercial applications; however, such learning lacks the capability to generate new insights and knowledge. In contrast, unsupervised learning discovers the inherent structures in unlabeled data, thereby helping generate new insights and actionable knowledge from large volumes of data.

By quantifying similarities, differences, lower-dimensional representations, and associations present in data without the need for human intervention, unsupervised learning can help discover new data-driven perspectives that aid rapid decision-making. Popular use cases of unsupervised learning include exploratory data analysis, data visualization, anomaly detection, topic categorization, data segmentation, feature extraction, structure discovery, and recommendation engines.

Common unsupervised learning approaches include:

- Exclusive or overlapping clustering based on statistical, optimization, hierarchical, and probabilistic methods

- Association rules

- Dimensionality reduction and compression (e.g., principal component analysis, singular value decomposition, and autoencoder)

Real-world implementation of unsupervised learning, in general, faces the following challenges:

- Computational complexity when the data volume and data dimensionality increase

- Higher risk of inaccurate, uninformative, and irrelevant result

- Extensive human intervention required to validate and understand results

Use of Unsupervised Learning on Subsurface Data

The use of supervised learning on subsurface data is constrained by the availability of targets/labels associated with the measured signals. Consequently, unsupervised learning methods are valuable for analyzing subsurface data. Unsupervised learning helps discover hidden relationships, generate new insights, and identify new patterns when working with unlabeled data sets acquired in complex geological systems.

Case Study: Clustering of Time-Lapse Electrical Resistivity Tomography (ERT) Measurements for Rapid Visualization of a Subsurface Geological Carbon-Storage Reservoir

As an example of the use of unsupervised learning on subsurface data, we developed an unsupervised spatiotemporal clustering work flow to process the ERT measurements acquired in a subsurface geological carbon-storage reservoir over 90 days. This unsupervised learning work flow is described in detail in Gonzalez and Misra (2022a). The work flow is based on initial findings presented in Gonzalez and Misra (2022b).

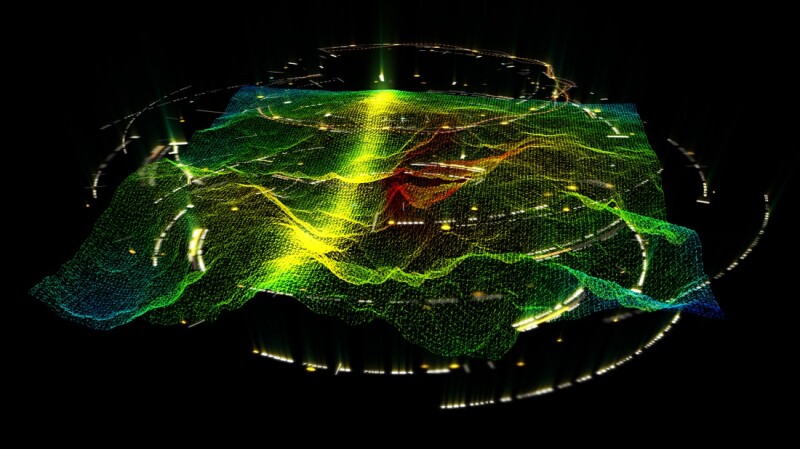

Using dynamic time wrapping (DTW) K-means clustering, four distinct spatiotemporal clusters were identified in the CO2-storage reservoir (Fig. 1). Davies-Bouldin, Calinski-Harabasz, and DTW-silhouette scores are used to evaluate the performance of the proposed spatiotemporal clustering. The four clusters identified using DTW K-means clustering exhibit an average Davies-Bouldin (DB) index of 0.71, Calinski-Harabasz (CH) index of 262,791, and DTW-silhouette score of 0.58. Unlike traditional clustering methods, the DTW K-means incorporates a temporal distance metric. Compared with DTW K-means, traditional clustering methods such as agglomerative and mean-shift clustering exhibit a lower performance, with DB indices of 0.95 and 1.01, respectively, and CH indices of 131,593 and 69,438, respectively. More details can be found in Gonzalez and Misra (2022a).

Metrics for Evaluation of Spatiotemporal Clusters

An important requirement for robust clustering is to determine the optimal number of clusters in the data set. The clusters should be consistent and robust. The performance of the clustering work flow can be quantified using several metrics, such as Davies-Bouldin, Calinski-Harabasz, and DTW-silhouette scores, also referred as internal validation measures. These metrics assess the goodness of the data partitioning without the use of any external information. These scores serve as heuristic tools to evaluate the clustering performance. These scores are based on the compactness (similarity) of each cluster and the separation (distinction) between the clusters. A higher value Calinski-Harabasz score and lower value Davies-Bouldin score indicate a robust clustering. For the DTW-silhouette score, the range of performance is set from −1 to 1, where 1 represents the best clustering performance.

Need of Clustering for Spatiotemporal Characterization of a Geological Carbon-Storage Reservoir

Conventional method for locating/assessing the injected CO2 plume in the subsurface assumes a geophysical model to assess the spatial distribution of CO2 content. The geophysical model is specific to sensor configuration, sensor type, engineering design, and reservoir properties. The assumed geophysical model may not be applicable to all types of CO2-injection reservoirs and scenarios. We developed a novel unsupervised learning methodology based on spatiotemporal clustering for the timelapse monitoring of the CO2 plume in the subsurface as CO2 is being injected into a geological carbon-storage reservoir. This data-driven approach is adaptive and scalable without incorporating a predefined geophysical model. The unsupervised learning facilitates sensor-agnostic, geophysical-model-free, rapid monitoring of the CO2 content and distribution in subsurface. Benefits of using unsupervised clustering compared with traditional geophysical models include:

- Clustering can process sensor data irrespective of the sensor types, transmitter/receiver configurations, sensor-data processing, engineering designs, CO2 injection schedules, and geological properties of the CO2 injection reservoir.

- Rapid spatiotemporal monitoring of CO2 plume movement can be achieved for any type of geophysical data acquired from any type of geological carbon storage site without requiring a specific assumption of the geophysical model or specific source-sensor configuration.

- The unsupervised work flow will allow pathways for improved assimilation of expert domain knowledge in the form of physical interpretations and the infusion physical principles.

References

Gonzalez, K., and Misra, S., 2022a. Monitoring the CO2 Plume Migration during Geological Carbon Storage using Spatiotemporal Clustering.