The diagnostic fracture injection test (DFIT), also called a mini-frac, has helped steer the completion designs of countless horizontal wells over the years. However, an ongoing industry study is about to give engineers a new, and possibly significantly more accurate, way to use it.

Mark McClure, the founder and chief executive officer of ResFrac Corp., expects the project to reshape practice: “What I believe is going to happen is that in 3 years everyone will have completely changed how they interpret the test.”

Though McClure’s company is less than 3 years old, its modeling code plays a central role in the study, whose participants include several of the biggest shale operators (Apache, Equinor, ConocoPhillips, Hess, and Shell) along with service provider Keane Group.

Their work amounts to a yearlong investigation into why conventional modeling technology often misinterprets DFIT data, a problem that, among other things, has led to large overestimates of shale formations’ true permeability. Permeability calculations are the group’s primary focus because they weigh heavily on horizontal well spacing and fracture cluster spacing, two of the biggest design criteria in the sector’s quest for shale optimization.

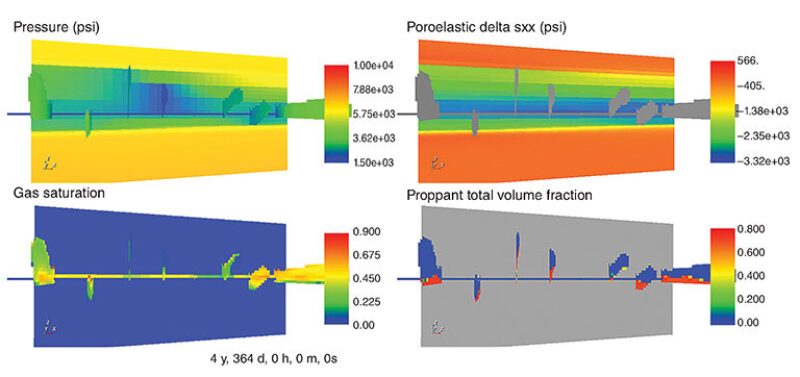

Palo Alto-based ResFrac (formerly known as McClure Geomechanics) was tapped for the study, which began in January, in part because it has developed what it says is the first integrated reservoir and hydraulic fracture model. Traditionally, the unconventional business has used separate fracture and reservoir models and then combined the results. Though common, this iterative process forces constant trade-offs in the accuracy of key assumptions.

By contrast, ResFrac says its model retains all of the fracture and reservoir physics, at both the micro and macro scale, in a single environment, giving a more complete explanation of tight-rock complexities.

For DFITs, the study group is using this model to understand why past permeability estimates have been inaccurate, and it plans to publish its workflow through SPE as the equivalent of a mathematical “cookbook” for other engineers.

Getting Closer to Reality

McClure recalled scrutinizing a conventional interpretation of DFIT data that yielded a formation permeability estimate of 1 microdarcy (1,000 nanodarcy). However, the well’s operator used history matching on the same data set to conclude that the real figure was more likely 10–20 nanodarcy.

The study’s new interpretation split the difference with an estimate of 15 nanodarcy, and the ResFrac modeling work explained why the conventional interpretation was so far off.
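To put the size of that gap in numbers, the short sketch below converts the conventional estimate to nanodarcy (1 microdarcy = 1,000 nanodarcy) and divides it by each of the figures quoted above. The function name is purely illustrative.

```python
# Unit conversion: 1 microdarcy (µd) = 1,000 nanodarcy (nd).
ND_PER_MICRODARCY = 1_000


def overestimate_factor(conventional_ud: float, reference_nd: float) -> float:
    """How many times larger a conventional estimate (in µd) is than a reference value (in nd)."""
    return conventional_ud * ND_PER_MICRODARCY / reference_nd


conventional = 1.0  # µd, the conventional DFIT interpretation quoted in the text
for label, ref in [("history match, low end", 10.0),
                   ("history match, high end", 20.0),
                   ("new interpretation", 15.0)]:
    print(f"{label}: {overestimate_factor(conventional, ref):.1f}x")
```

Against the operator’s history match, the conventional figure is 50 to 100 times too high; against the study’s 15-nanodarcy estimate, it is roughly 67 times too high.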

In this case, the high figure was discarded and never used in a completion design, but if such results were acted on, “That could lead you to make a decision that’s tremendously suboptimal from an economic perspective,” McClure said.

Complicating matters, even a permeability estimate that is wrong, or very wrong, can be masked in a conventional model by assuming that effective fracture lengths (the portion of a fracture actually producing hydrocarbons) are longer or shorter than they really are. If this happens, operators might space fracturing clusters too far apart, a decision that could end up bypassing reserves.
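One way to see how a permeability error can hide behind fracture length relies on an assumption the article itself does not make: that production is in transient linear flow, a common simplification for fractured shale wells. In that regime, production data constrain roughly the product of fracture half-length and the square root of permeability, so a model can keep the same match by trading one against the other. The sketch below is illustrative only; the function name and the 100 m starting half-length are hypothetical.

```python
import math


def compensating_length(length_true: float, k_true: float, k_assumed: float) -> float:
    """Fracture half-length that keeps x_f * sqrt(k) unchanged when k is misestimated.

    Assumes transient linear flow, where a production match constrains only
    the product x_f * sqrt(k), not each factor separately.
    """
    return length_true * math.sqrt(k_true / k_assumed)


# Hypothetical case: true half-length 100 m, true permeability 15 nd,
# assumed permeability 1,000 nd (the conventional estimate quoted above).
x_f = compensating_length(100.0, 15.0, 1_000.0)
print(f"{x_f:.1f} m")  # → 12.2 m
```

Under this assumption, overestimating permeability about 67-fold lets a model match the same production with effective fractures roughly one-eighth as long, which is why the two errors can offset each other and go unnoticed.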

Why a Tiny Transition Matters

What happens in a DFIT is the key to understanding the research breakthrough.

DFITs are seen as the last opportunity for operators to acquire direct reservoir measurements from a new well before the hydraulic fracturing treatment, which generally makes up two-thirds of the well’s total cost. In that fresh well, shale producers pump about 10 bbl of fluid into the toe section of the lateral. At high enough pressure, this opens a small fracture, whose pressure response is then monitored continuously by downhole gauges for days or weeks.

From this, engineers try to observe how the small fracture opens, how fluid leaks off into the reservoir, and how the fracture closes again. They then model the pressure signals to predict what might happen when full-size fractures are created later.

While conventional models (a fracture simulator for the pre-closure period and a reservoir simulator for the post-closure period) can adequately describe those effects, “the problem is that there is a transition,” McClure explained.

As the fracture closes, its walls come into contact but still leave a tiny aperture, so the fracture is neither wide open nor fully shut. Within this crack, important interactions between rock and fluid continue for days. The research project aims to show that once these interactions are better understood, a much more accurate picture of formation permeability can be drawn from the data.

McClure noted that, in keeping with the spirit of noncommercial research, the methodology (possibly released in early 2018) will not depend on his company’s or anyone else’s numerical simulator; engineers can simply follow the plots and equations to get more reliable answers from DFITs.

Earlier research in this area has challenged another aspect of traditional DFIT interpretation, with details published in 2016 in the peer-reviewed SPE Journal (SPE-179725). McClure worked on that project while he was an assistant professor at the University of Texas at Austin’s petroleum engineering school, along with other faculty and experts from ConocoPhillips. He said they showed that the fracture closure pressures historically derived from DFIT data are also “frequently, and substantially inaccurate.”